Software Stop Tracker Overview Covering Miksostop and Monitoring Feedback

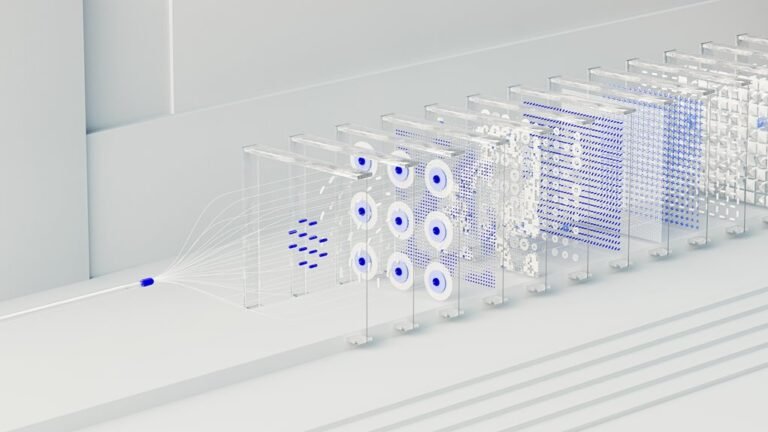

A Software Stop Tracker provides end-to-end visibility into where software operations halt, stall, or fail to progress, with a focus on signal quality, timeliness, and incident impact. It integrates with Miksostop to correlate stop events with observed failures, identify recurring failure patterns, and streamline alert routing without locking teams into fixed monitoring practices. By emphasizing latency, coverage, and ownership, it turns stop-tracking data into actionable reliability improvements. The framework also invites scrutiny of its feedback loops and of how they drive proactive maintenance.

What Is a Software Stop Tracker and Why It Matters

A software stop tracker is a tool that records and analyzes the points at which software operations halt, stall, or fail to progress.

It provides visibility into failures, latency, and recovery, which is the data teams need to turn individual stoppages into reliability improvements.

How Miksostop Fits Into the Monitoring Ecosystem

Miksostop plugs into existing telemetry and correlates stop events with observed failures. It identifies recurring failure patterns and streamlines alert routing, which reduces noise and speeds up responses. The approach emphasizes interoperability, minimal overhead, and clear ownership, so teams can stay resilient while remaining free to evolve their monitoring practices.
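The correlation step can be illustrated with a minimal sketch. It does not use any Miksostop API, since none is described here; the `StopEvent` and `Failure` records, the `correlate` function, and the five-minute window are all hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class StopEvent:
    """Hypothetical record of a point where an operation halted or stalled."""
    service: str
    at: datetime


@dataclass(frozen=True)
class Failure:
    """Hypothetical record of a failure observed by existing telemetry."""
    service: str
    at: datetime
    kind: str


def correlate(stops, failures, window=timedelta(minutes=5)):
    """Pair each stop event with failures on the same service within `window`.

    The window size is an assumed default, not a documented setting.
    """
    pairs = []
    for stop in stops:
        matched = [
            f for f in failures
            if f.service == stop.service and abs(f.at - stop.at) <= window
        ]
        pairs.append((stop, matched))
    return pairs
```

A stop with no matched failures is still returned with an empty list, so uncorrelated stops remain visible instead of being silently dropped.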

Key Metrics to Track for Stop-Tracking Performance

Key metrics for stop-tracking performance focus on measuring signal quality, timeliness, and impact on incident response.

Two measurements matter most: latency, which exposes delays in signal propagation, and alert routing efficiency, which shows whether alerts reach the correct responders promptly.

Structured dashboards summarize reliability, coverage, and actionability, enabling objective comparisons while leaving teams free to optimize their own processes.
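As a rough sketch of how two of these metrics might be computed, the example below derives a median alert latency and a routing accuracy from a list of alert records. The `AlertRecord` fields and the `summarize` function are assumptions made for illustration; a real deployment would read these values from whatever its telemetry actually exposes.

```python
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class AlertRecord:
    """Hypothetical alert record; times are in seconds since the epoch."""
    occurred_s: float  # when the stop happened
    alerted_s: float   # when the alert fired
    routed_to: str     # team that received the alert
    owner: str         # team that actually owns the service


def summarize(records):
    """Return median alert latency and the share of correctly routed alerts."""
    if not records:
        return {"median_latency_s": None, "routing_accuracy": None}
    latencies = [r.alerted_s - r.occurred_s for r in records]
    correct = sum(1 for r in records if r.routed_to == r.owner)
    return {
        "median_latency_s": median(latencies),
        "routing_accuracy": correct / len(records),
    }
```

The median is used rather than the mean so that a single very slow alert does not dominate the latency figure; a percentile such as p95 would be a reasonable alternative.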

Interpreting Feedback to Improve Reliability and Uptime

Feedback interpretation translates observed stop-tracking performance into concrete reliability improvements.

The analysis distills reliability insights from failures and near-misses, mapping trends to actionable steps.

It emphasizes disciplined monitoring of uptime signals, distinguishing transient disturbances from systemic issues.

Decisions rely on verifiable data, clear thresholds, and documented responses. This enables proactive maintenance, concise reporting, and a continuous reduction in downtime without compromising operator autonomy.
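One simple way to apply a clear threshold when separating transient disturbances from systemic issues is to count stops per service over a review period. The sketch below assumes that approach; the `classify` function and the threshold of three stops are illustrative choices, not values taken from any tool.

```python
from collections import Counter


def classify(stop_services, systemic_threshold=3):
    """Label each service by how many stops it logged in the review period.

    `stop_services` is a list with one service name per recorded stop.
    Services at or above the (assumed) threshold are labeled "systemic";
    the rest are labeled "transient".
    """
    counts = Counter(stop_services)
    return {
        service: "systemic" if n >= systemic_threshold else "transient"
        for service, n in counts.items()
    }
```

For example, `classify(["api", "api", "api", "batch"])` labels `api` as systemic and `batch` as transient. A production rule would likely also weigh stop duration and impact, which this count-based sketch ignores.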

Conclusion

In short, the Software Stop Tracker promises flawless observability, except for those rare moments when it doesn’t. By weaving Miksostop into the monitoring tapestry, teams gain the illusion of control while chasing ever-elusive latency targets. Feedback loops supposedly sharpen reliability, yet they gracefully confirm what operators already knew: incidents happen anyway. The irony lies in turning stoppages into strategy, which proves that uptime is a craft, not a state, and that more dashboards somehow equal fewer interruptions. Quite a paradox, neatly packaged.